tl;dr

Google (including YouTube), Koo, Meta (Facebook and Instagram), ShareChat, Snap, Twitter and WhatsApp have released their reports in compliance with Rule 4(1)(d) of the IT Rules 2021 for the month of February 2022. The latest of these was published by WhatsApp on April 1, 2022. The reports contain similar shortcomings, which exhibit a lack of effort on the part of the social media intermediaries and the government to further transparency and accountability in platform governance. The intermediaries have, yet again, not reported on government requests, have used misleading metrics, and have not disclosed how they use algorithms for proactive monitoring. You can read our analysis of the previous reports here.

Background

Under Rule 4(1)(d) of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (‘IT Rules’), significant social media intermediaries are required to publish monthly compliance reports. In these reports, they are required to:

- Mention the details of complaints received and actions taken thereon, and

- Provide the number of specific communication links or parts of information that the social media platform has removed or disabled access to by proactive monitoring.

"Any other relevant information" can be specified and sought from significant social media intermediaries. Significant social media intermediaries, here, refers to those intermediaries which have 50 lakh or more registered users.

In order to understand the impact of the IT Rules on users and the functioning of intermediaries, we examine and analyze the compliance reports published by Google (including YouTube), Koo, Meta (Facebook and Instagram), ShareChat, Snap, Twitter and WhatsApp to capture some of the most important information for you below.

Revelations made in the data on proactive monitoring:

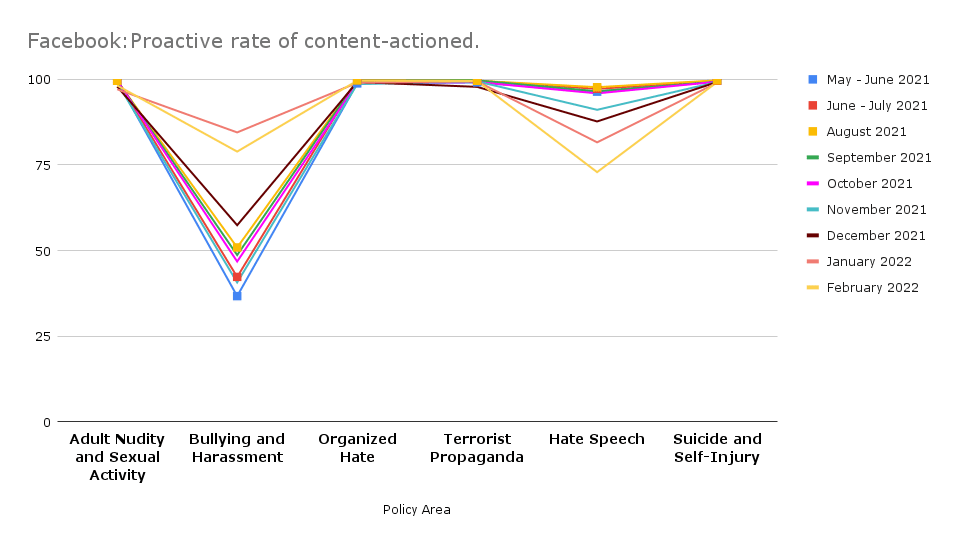

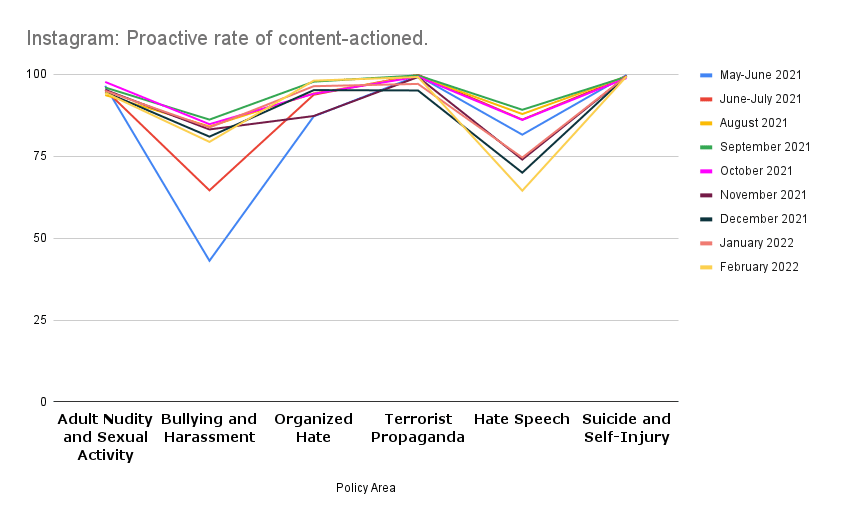

Meta (Facebook and Instagram) has not changed the metrics adopted for reporting proactive takedowns, despite disclosures made by Frances Haugen. In its report, Meta adopts the metrics of (i) ‘content actioned’, which measures the number of pieces of content (such as posts, photos, videos or comments) that they take action on for going against their standards and guidelines; and (ii) ‘proactive rate’, which refers to the percentage of ‘content actioned’ that they detected proactively before any user reported it. This metric is misleading because the proactive rate is measured as a percentage of only that content on which action was taken (either proactively or on the basis of a user complaint). It does not show what percentage of all content on Facebook (which may otherwise be an area of concern) was proactively taken down. Such a metric neither reflects the efficiency of the proactive takedown mechanism nor discloses the process followed for undertaking automated takedowns. We have written more about this in our previous post.
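To make the limitation concrete, here is a minimal sketch of how a proactive rate of this kind is computed. The function name and every figure in it are hypothetical, chosen only for illustration; none of them come from Meta's report.

```python
def proactive_rate(proactively_actioned: int, actioned_after_report: int) -> float:
    """Percentage of actioned content that was detected before any user report."""
    content_actioned = proactively_actioned + actioned_after_report
    if content_actioned == 0:
        raise ValueError("no content was actioned")
    return 100 * proactively_actioned / content_actioned


# Hypothetical month: 990 pieces actioned proactively, 10 after user reports.
rate = proactive_rate(proactively_actioned=990, actioned_after_report=10)

# Hypothetical totals of violating content actually present on the platform.
# Platforms do not report this number, so it is an assumption in both cases.
for total_violating in (1_000, 100_000):
    coverage = 100 * (990 + 10) / total_violating
    print(f"proactive rate: {rate:.1f}% | violating content actioned: {coverage:.1f}%")
```

In both hypothetical scenarios the proactive rate is identical, even though the share of violating content that was actually acted upon differs by a factor of one hundred. The reported metric cannot distinguish between the two.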

Data on proactive monitoring for the other Social Media Intermediaries is as follows:

- Google’s proactive removal actions, through automated detection, decreased to 3,38,938 in February 2022, from 4,01,374 in January 2022. The figures stood at 4,05,911 in December 2021, 3,75,468 in November 2021, and 3,84,509 in October 2021.

- Twitter suspended 30,992 accounts on grounds of child sexual exploitation, non-consensual nudity, and similar content; and 3,924 for promotion of terrorism. These numbers stood at 37,466 and 4,709, respectively, in January 2022.

- WhatsApp banned 14,26,000 accounts in February 2022, a decrease from 18,58,000 accounts in January 2022. The figures stood at 20,79,000 in December 2021, 17,59,000 in November 2021, and 20,69,000 in October 2021. These bans are enforced as a result of WhatsApp’s abuse detection process which consists of three stages - detection at registration, during messaging, and in response to negative feedback, which it receives in the form of user reports and blocks.
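The month-on-month movement in these figures is easier to compare as a percentage. The following is a minimal sketch that reads the figures as quoted above (written with Indian digit grouping) and computes the change from January to February 2022. The helper names are our own and purely illustrative.

```python
def parse_indian_number(text: str) -> int:
    """Convert a figure written with Indian digit grouping, e.g. '14,26,000', to an int."""
    return int(text.replace(",", "").replace(" ", ""))


def percent_change(previous: int, current: int) -> float:
    """Percentage change from the previous month to the current one."""
    return 100 * (current - previous) / previous


# (January 2022, February 2022) figures as quoted in the list above.
figures = {
    "Google proactive removals": ("4,01,374", "3,38,938"),
    "Twitter suspensions (CSE and similar)": ("37,466", "30,992"),
    "Twitter suspensions (terrorism)": ("4,709", "3,924"),
    "WhatsApp account bans": ("18,58,000", "14,26,000"),
}

for label, (january, february) in figures.items():
    change = percent_change(parse_indian_number(january), parse_indian_number(february))
    print(f"{label}: {change:+.1f}%")
```

On these figures, the declines are roughly 16% for Google and 23% for WhatsApp. A percentage view of this kind says nothing about why the numbers moved, since the reports do not explain the underlying detection processes.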

While reporting on proactive takedowns, all intermediaries are opaque about the processes and algorithms they follow for proactive takedown of content. Facebook and Instagram state that they use “machine learning technology that automatically identifies content” that might violate their standards, Google uses an “automated detection process”, and Twitter claims to use “a combination of technology and other purpose-built internal proprietary tools”. WhatsApp has released a white paper discussing its abuse detection process in detail and disclosing how it uses machine learning. While WhatsApp has made an attempt to explain how it proactively takes down content, the lack of human intervention in monitoring the kind of content that is taken down may lead to unrestricted censorship of lawful content (see the various petitions challenging content takedown before the Delhi High Court).

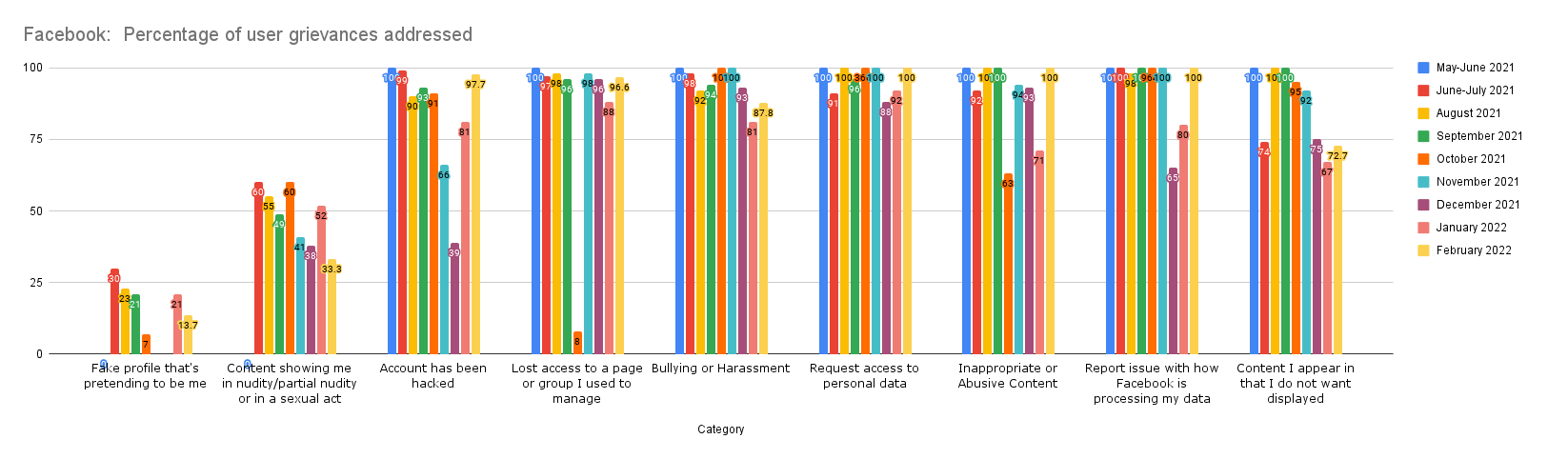

What does the data on the complaints received reveal?

As per their reports, Google, Meta, Twitter and WhatsApp receive grievances via email or post addressed to their grievance officers. Facebook (and Instagram) and Google also state that complaints can be made through contact forms on their help centres and through webforms on the India grievance officer page, respectively. Twitter users can also report directly from the tweet or the account in question when they are logged in. WhatsApp can be contacted through ‘contact us’ on the app.

In the case of Google, 97.1% of the complaints received and 99.9% of content removal actions related to copyright and trademark infringement, the same as in the last report. We have written about the trend of ‘complaint bombing’ of content for copyright infringement on YouTube to suppress dissent and criticism here. Other reasons for content removal included circumvention (60), court order (47), graphic sexual content (2), and impersonation (1). Content removals based on court orders increased to 47 in February 2022 (from 31 in January 2022 and 37 in December 2021), after an earlier increase to 56 in November 2021. The figure stood at 49 in October 2021, and at 10, 12, 4, 6, and nil in the earlier months since the addition of this parameter to the report in May 2021.

For Twitter, the largest number of complaints received related to abuse/harassment (161), followed by impersonation (40), hateful conduct (18), misinformation (14), defamation (11) and I.P. related infringement (7). The largest number of URLs actioned related to abuse and harassment (471), sensitive adult content (50), hateful conduct (42) and I.P. related infringement (35). The number of complaints received for hateful conduct decreased slightly to 18 in February 2022, from 20 in January 2022. It had stood at 12 in December 2021 and 13 in November 2021, down from an all-time high of 25 in October 2021 (it stood at 6, 0, 12 and 0 in the months before that).

What continues to be astounding is that zero complaints were received by Twitter for content promoting suicide, and for child sexual exploitation content. It is important to note here that India is a country with 23.60 million Twitter users. The small number of monthly complaints received on such prominent issues may indicate one of two possibilities: (i) the grievance redressal system is turning out to be ineffective, or (ii) Twitter is able to take down most of such content proactively. Unless there are disclosures claiming the contrary, or audits are conducted into the platform governance operations of these social media intermediaries, there is no easy way to know which possibility weighs more.

For WhatsApp, 335 reports were received in total, out of which WhatsApp took action in 21 cases. All but 2 of these related to ban appeals, i.e. appeals against the banning of accounts. This number offers an interesting contrast with the number of accounts that WhatsApp banned proactively on the basis of its own abuse detection process - 14,26,000.
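The scale of this contrast can be worked out directly from the figures quoted above. The short calculation below is only an illustrative sketch; the variable names are ours.

```python
reports_received = 335
reports_actioned = 21
proactive_bans = 1_426_000  # 14,26,000 in Indian digit grouping

# Share of user reports on which WhatsApp took action.
action_rate = 100 * reports_actioned / reports_received

# Number of proactive bans for every user report that led to action.
bans_per_actioned_report = proactive_bans / reports_actioned

print(f"User reports actioned: {action_rate:.1f}%")
print(f"Proactive bans per actioned report: {bans_per_actioned_report:,.0f}")
```

In other words, action followed in about 6% of user reports, while proactive bans outnumbered actioned reports by tens of thousands to one.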

The aggregated data provided in the reports exhibits an intermediary’s own assessment. It does not disclose the merits of the underlying cases. This makes it difficult to evaluate the accuracy of takedown decisions, or spot any trends of inconsistent enforcement. Similarly, inferences cannot be made from the disclosure of the number of complaints received and the number of URLs taken down because one complaint may list any number of items.

Reports by Koo, ShareChat, LinkedIn and Snap Inc.

Koo publishes a one-page report with 4 categories of information: (i) content reported, (ii) proactive moderation, (iii) spam content reported and (iv) spam proactive moderation. There are no sub-categories of issues on which reports were received or content was taken down (other than spam). The report does not mention the process followed for content takedown, or explain the metric followed for reporting. In February 2022, according to the report, 2,393 reports were received, out of which 439 were “removed” and 1,828 were “overlay-ed, blurred, ignored, warned, etc.”. In the case of proactive moderation, 2,245 pieces of “content” were “highlighted”, 1,159 were “removed” and 686 were “overlay-ed, blurred, ignored, warned, etc.”

ShareChat in its report for February 2022, unlike other social media intermediaries, provides the number of requests from law enforcement authorities and investigating agencies. 11 such requests were received in February 2022, out of which user data was provided in 8 requests. Content was also taken down in 2 of these cases for violation of ShareChat’s community guidelines.

56,81,213 user complaints (as against 61,88,644 last month) were received which have been segregated into 22 reasons in the report. ShareChat takes two kinds of removal actions: takedowns and bans. As per the report, takedowns are either proactive (on the basis of community guidelines and other policy standard violations) or based on user complaints. Proactive takedown/deletion for February 2022 included chatrooms deletion (771), copyright takedowns (58,865), takedowns for reasons of comments deletion (87,076), user generated sexual content (1,88,290), and other user generated content (25,15,315).

ShareChat imposes three kinds of bans: (i) a user generated content ban, where the user is unable to post any content on the platform for the specified ban duration; (ii) an edit profile ban, where the user is unable to edit any profile attributes for the specified time period; and (iii) a comment ban, where the user is banned from commenting on any post on the platform for the specified ban duration. The duration of these bans can be 1 day, 7 days, 30 days or 260 days. In case of repeated breach of guidelines, user accounts are permanently removed for 360 days. As a result, 9,391 accounts were permanently terminated in February 2022.

Snap Inc. received 53,373 content and account reports through Snap's in-app reporting mechanism in February 2022. Enforcement action was taken on content in 10,497 cases and against 7,153 unique accounts. Most reports continued to be related to sexually explicit content (23,026), followed by impersonation (18,158), spam (4,669), threat/violence/harm (4,416), harassment and bullying (3,770), regulated goods (2,636) and hate speech (700).

LinkedIn’s transparency reports contain global summaries of its community report and government request report. With respect to India, LinkedIn received 30 requests for member data from the government in 2021.

Existing issues with the compliance reports

The following issues undermine the objective of transparency sought to be achieved by these reports since their inception, and continue to persist in the reports for February 2022:

- Algorithms for proactive takedown: Social media intermediaries are opaque about the process/algorithms followed by them for proactive takedown of content. Only WhatsApp has explained how it proactively takes down content by releasing a white paper which discusses its abuse detection process in detail. The lack of transparency about human intervention in terms of monitoring the kind of content that is taken down continues to be a concern. The IT Rules under Rule 4(4) provide that social media intermediaries shall implement mechanisms for appropriate human oversight of measures deployed for proactive monitoring which includes a periodic review of any automated tools. None of the social media intermediaries have reported on such periodic reviews.

- Lack of uniformity: There is a lack of uniformity in reporting by the major social media intermediaries. Each intermediary has adopted its own format, and provided different policy areas or issues on which it takes down content. This makes it difficult to make an apples-to-apples comparison. The lack of uniformity is evidenced from the following: (i) the IT Rules were issued following a call for attention for “misuse of social media platforms and spreading of fake news”, but there seems to be no data disclosure on content takedown for fake news by any social media intermediaries other than Twitter, (ii) Google and WhatsApp have not segregated the proactive action taken into different kinds of issues, but have provided the total number of proactive actions taken by them, (iii) Twitter has identified only 2 broad issues for proactive takedowns as opposed to 13 and 12 issues identified by Facebook and Instagram, respectively (categories of child endangerment, and violence and incitement were added in the month of October.) Lack of uniformity makes it difficult for the government to understand the different kinds of concerns (as well as their extent) associated with the use of social media by Indian users.

- No disclosure of government removal requests: Even though compliance with the IT Rules does not mandate disclosure of how many content removal requests were made by the government, in order to truly advance transparency in the digital life of Indians, it is imperative that Social Media Intermediaries disclose, in their compliance reports, issue-wise government requests for content removal on their platforms.

How can the Social Media Intermediaries improve their reporting in India?

The Intermediaries can take the following steps while submitting their compliance reports under Rule 4(1)(d) of the IT Rules:

- Change the reporting formats: The social media intermediaries should endeavour to be truly transparent in their reporting in the compliance reports. They have been following a cut-copy-paste format from month to month showing little to no effort in overcoming the shortcomings and opacity in their reports. They must adhere to international best practices (discussed below) and make incremental attempts to tailor their compliance reports to further transparency in platform governance and content moderation.

- Santa Clara Principles must be adhered to: The social media intermediaries must incorporate the Santa Clara Principles On Transparency and Accountability in Content Moderation in letter and spirit. The operational principles of version 2.0 focus on numbers, notice and appeal. The first part, on ‘numbers’, suggests how data can be segregated by category of rule violated, provides for special reporting requirements for decisions made with the involvement of state actors, and sets out how to report on the flags received and parameters for increasing transparency around the use of automated decision-making.

What more can the Government do?

Transparency by social media intermediaries in the compliance reports will enable the government to collect and analyse India-specific data, which would further enable well-rounded regulation. For this, meaningful disclosure by the social media intermediaries is imperative. The Rules must be suitably amended to achieve transparency in a systematic manner. This can be achieved by prescribing a specific format and standard for reporting. The Santa Clara Principles can be used as a starting point in this regard. The US and the EU, in their draft legislation, have proposed that platforms report on how often proactive monitoring decisions are reversed, and these proposals may be used for guidance.

According to the FAQs issued by the Ministry of Electronics and Information Technology, (i) intermediaries have the discretion to decide the format for reporting, (ii) they can provide aggregate data on complaints received without disclosing granular details of all cases, and (iii) they can provide the number of communication links to report on proactive monitoring. The FAQs must be promptly amended to address the issues discussed in this blog.

Daphne Keller, who directs the Program on Platform Regulation at Stanford's Cyber Policy Center, says that it is time to prioritise what is sought in the name of transparency, and that “researchers and civil society should assume we are operating with a limited transparency ‘budget,’ which we must spend wisely – asking for the information we can best put to use, and factoring in the cost.” She has also prepared a list of operational questions which make it complicated for platforms to report, and which are difficult for researchers to answer. You may find these questions worth pondering! We urge the government to work in collaboration with policy and technology researchers and civil society organisations to improve transparency standards in India.

We will be back next month with a fresh analysis of the reports. Stay tuned.

Important Documents

- Koo’s IT Rules compliance report for Feb 2022. (link)

- WhatsApp’s IT Rules compliance report for Feb 2022 published on April 1, 2022. (link)

- Google’s IT Rules compliance report for Feb 2022. (link)

- Meta’s IT Rules compliance report for Feb 2022 published on March 31, 2022. (link)

- Twitter’s IT Rules compliance report for Feb 2022. (link)

- ShareChat’s IT Rules compliance report for Feb 2022. (link)

- LinkedIn’s Government Requests Report for 2021. (link)

- Snap’s compliance report for Feb 2022. (link)

- Our analysis of previous compliance reports. (link)